Data labeling and annotation for AI: Best practices for high-quality training data

Artificial intelligence is only as good as the data that shapes it. In today's businesses, artificial intelligence is driving change across industries — from healthcare and finance to retail and logistics. Behind all successful AI applications is a core foundation: data labeling and annotation for AI. Without clear data preparation for AI, even the best algorithms miss the mark.

AI models learn by example. They depend on high-quality training data to recognize patterns, spot anomalies, and make accurate decisions. The quality of machine learning training data directly impacts AI model accuracy, no matter the industry or use case.

Forward-thinking teams don't treat data preparation for AI as an afterthought. They work to improve data quality for AI from the start, making sure every label is trusted and every annotation is consistent. When your data science team invests in robust, reliable datasets, you build confidence in every artificial intelligence project.

This article explains why quality data labeling and data annotation best practices are central to AI development. You'll see how top teams boost AI model accuracy and unlock more value from machine learning training data. By focusing on data quality for AI, you'll set your AI applications apart from the competitors.

Understanding data labeling and annotation for AI

Let's break down the basics of data management. Data labeling and annotation for AI refer to the process of adding meaningful tags to data, which gives artificial intelligence a clear foundation for learning. This is vital for every stage of machine learning.

There are many types of data to prepare. In supervised learning, teams manually assign labels that guide the algorithm. For text heavy projects with big data processing, NLP data labeling highlights important entities, sentiments, or intent within sentences. In image projects, computer vision annotation helps identify and classify objects or outline regions of interest.

Here's how managing data annotation projects might look for a real-world team:

- Data engineers gather diverse raw information.

- Specialists perform data transformation, converting formats or removing duplicate entries.

- Annotators use data annotation best practices, such as step-by-step instructions and consistent labeling rules, to create high-quality machine learning training data.

The quality of your definitions, processes, and checks will determine how well your AI learns — right from the start.

Understanding these practical tasks prepares your team for the next step: exploring how high-quality training data directly influences the accuracy and strength of your AI model and then AI applications.

The impact of high-quality training data on AI model accuracy

Why does high-quality training data matter so much? In AI development, the difference between good and great often comes down to your data management. When every record is clear and consistent, you set the stage for accurate results and long-term success.

Consider these core reasons:

- AI model accuracy starts with data management. Clean, well-labeled datasets give machine learning algorithms real structure, helping them understand patterns instead of noise. High-quality training data removes confusion so your model learns what's important.

- Reliable data means robust AI models. When you sample from a wide, representative pool, your models adapt better to new situations. That means fewer surprises when real people use your AI applications in production.

- Data quality for AI drives performance improvements. Consistent, accurate input reduces errors and false outputs. With data annotation best practices, your model will require less re-training and yield more dependable predictions.

- Strong data quality standards power every area of data science. Good practices go beyond one-time tuning — they influence collaboration, documentation, and even compliance in regulated industries.

- Incremental improvements add up. Small changes in data quality for AI — clearer labels, more diverse examples, or extra validation — can make a measurable difference. They lead to model performance improvement you can see and prove.

High-quality training data is never a “nice-to-have” in machine learning. For every AI project management, it is the backbone that determines how well your system can solve business problems.

Now that the stakes are clear, the next step is learning how successful teams make sure their data is ready for the challenges ahead. Let's look at the data annotation best practices that deliver consistent results.

Data annotation best practices for AI development

Great results in artificial intelligence always start with smart processes. Data annotation best practices help teams produce high-quality training data that fuels leading AI applications. Here's how expert data science teams make every labeled record count:

Base your AI development on clear guidelines.

Set out detailed instructions for data annotators. Ambiguous rules create mistakes — clear data management guidelines help your team deliver reliable machine learning training data.

Invest in strong data management.

Organize your annotation workflow from the start. Teams that actively monitor data quality for AI spot problems early, so mistakes never get buried.

Adopt a human-in-the-loop AI approach.

Combine automated checks with careful human review, especially for challenging cases in supervised learning. This blend increases both speed and accuracy.

Test for consistency and accuracy.

Regularly run checks on annotated samples. Look for errors, missed elements, or off-standard results. This quality control ensures every dataset meets your high-quality training data standards.

Prioritize ongoing training.

Give data annotators feedback, coaching, and clear examples. This continuous learning process raises the bar for data annotation best practices across your whole organization.

Consider your AI application's context.

Tailor your annotations to the needs of your artificial intelligence use case. For example, speech projects require very different labels from vision or text projects.

Document every data management decision.

Track changes and keep a log of your labeling rules. Solid record-keeping in data management helps your team repeat successes — or adjust fast when outcomes change.

Every step above feeds into better machine learning training data and sharper AI results. But even the strongest AI project management needs the right technology. Next, let's explore the data science tools and services that bring annotation workflows to life.

Data labeling tools and services

The right technology takes data annotation best practices and makes them scalable. Today, teams choose from a growing range of data labeling tools and data labeling services — each approach fits different needs, budgets, and project sizes.

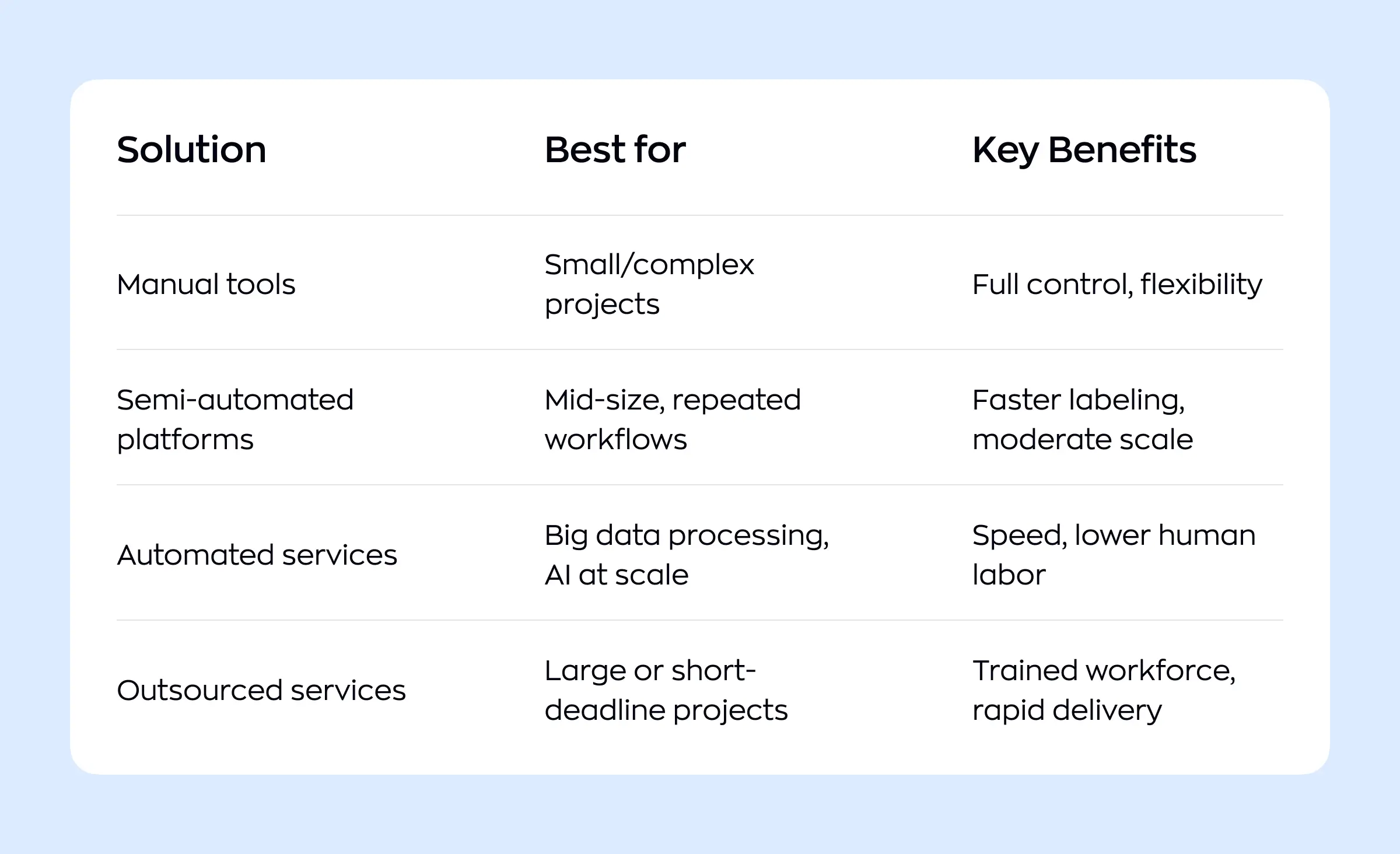

Here's how the main options compare:

Leading data labeling tools offer features for quality checks, integration with big data processing, and seamless data management. Some teams rely on data labeling services for fast scaling and access to specialized talent. Good AI project management means choosing the right blend for your goals and monitoring cost, speed, and accuracy throughout the process. Let's expand each point.

Manual tools

Manual data labeling tools are typically open source or lightweight commercial apps that give teams granular control over how they implement data annotation best practices. They are widely used for computer vision and document use cases, where ML engineers and data managers want to iterate quickly on label taxonomies before committing to large-scale production workflows.

Common examples include:

CVAT for image and video annotation with advanced manual and semi-automated tools.

LabelImg and LabelMe for bounding boxes and polygons in smaller computer vision projects.

VIA (VGG Image Annotator) and similar browser-based tools for quick, local experiments with data science teams.

This approach fits teams that prioritize full control, on-premise deployment, and tight coupling with internal data management policies, even if that means less automation and more manual effort.

Semi-automated platforms

Semi-automated data annotation platforms add AI-assisted workflows, collaboration, and governance on top of manual labeling. They often include pre-labeling models, task queues, review stages, and role-based permissions, which makes them a good fit for managing data annotation projects across multiple annotators and reviewers.

Representative platforms:

Labelbox, with unified data and model management plus active learning and analytics for AI project management.

Encord, with multimodal support, strong QA tooling, and integration into production-grade MLOps environments.

SuperAnnotate and Dataloop, combining annotation, data management, and automated QA rules in one environment.

These tools integrate with cloud storage and training pipelines so big data processing jobs can continuously pull newly labeled data, retrain models, and feed feedback back into the annotation loop.

Automated services and ML-assisted workflows

Automated services push further into AI-assisted labeling, focusing on speed and volume for large-scale datasets. In this model, auto-labeling models, smart sampling, and active learning are used to pre-label most of the data, while human reviewers correct and audit edge cases.

Examples and capabilities:

Labellerr, designed for high-speed AI-assisted labeling across diverse data types at enterprise scale.

Encord and similar platforms offer automated QA rules, model-assisted annotation, and feedback loops between models and annotators.

Data curation layers that sit on top of existing tools, using active and self-supervised learning to prioritize the most informative samples for human review.

For data science and ML teams, this model ties directly into big data processing environments where data streams continuously and annotation is part of a closed-loop training pipeline.

Outsourced and managed services

Outsourced data labeling services package the tooling, workforce, and project operations into one offering. These data science vendors recruit and train annotators, run quality control, manage guidelines, and deliver labeled datasets under service-level agreements, which can be critical for time-sensitive AI applications.

ML engineers and data managers are critical here — they pick the best tool or data labeling services for the job, set up workflows, and keep AI project management running smoothly. Your data science team will depend on these choices as they shape, train, and iterate on machine learning models.

Matching the right solution to your project provides clean, accurate data every step of the way. As your team expands its labeling capabilities, it's just as important to have strong oversight and coordination. Next, we'll look at successful AI project management at scale.

Managing data annotation projects at scale

Scaling data annotation means solving new problems in consistency, coordination, and oversight. Here's how experienced teams handle large, complex projects, involving big data processing, and produce high-quality data for robust AI models.

Establish roles and clear processes

All successful AI applications start with a team structure. Assign data managers to handle daily workflow and quality checks. Involve data engineers early — they manage data transformation, automate data pipelines, and handle integrations needed for big data processing.

Enforce data annotation best practices

Set annotation rules and definitions in writing, making sure all annotators use the same standards. Standard operating procedures allow teams to review work and maintain high data quality for AI, even as task volume increases.

Build smart workflows with automation

For big data processing, manual checks alone can't keep up. Use AI project management tools to assign tasks, schedule regular reviews, and log every change. Active learning for labeling lets algorithms surface ambiguous records for human judgment. This approach saves time and directs attention where it matters most.

Prioritize data governance AI requires

As data grows, so does risk. Rolling out a data governance AI framework protects privacy and traceability. Version data, log key decisions, and schedule audits — these steps limit errors and help with compliance.

Communicate and iterate

Continuous feedback matters. Schedule brief daily check-ins and gather input from annotators. Update documentation quickly as new edge cases appear.

Scaling a data annotation project takes more than increasing headcount — it means building systems that make growth sustainable. With a strong foundation in place, your team is ready to tackle specialized tasks like computer vision and NLP annotation, each with its own unique challenges.

Specialized annotation tasks

AI applications often demand unique annotation approaches, depending on the type of data and use case. The variety and complexity of these tasks shape both machine learning training data and final model results.

Computer vision annotation

In computer vision annotation, specialists draw boxes, polygons, or masks around objects in images or video frames. This process helps artificial intelligence systems identify features like faces, vehicles, or defects in products. High-quality computer vision annotation is a must for self-driving cars, medical imaging, and smart surveillance. Consistent, precise labels here are core to data quality for AI and model performance improvement in visual tasks.

NLP data labeling

Text-based AI, such as chatbots or search engines, relies on careful NLP data labeling. Annotators tag sentences for sentiment, highlight entities — like names or dates — and categorize topics. For supervised learning tasks, labeled examples show models how to separate fact from opinion or recognize intent in a customer message. Inaccurate NLP data labeling can easily introduce subtle errors — tight guidelines are essential to prevent them.

Synthetic data and advanced methods

Some AI applications lack enough real-world examples, especially for rare situations. In these cases, teams turn to synthetic data generation. This process creates artificial samples to round out existing datasets, improve balance, and strengthen machine learning training data. Synthetic data can boost model performance improvement where real data is limited, but it also introduces new quality control challenges.

Every domain calls for a unique approach to data labeling and annotation for AI. Whether working with images, text, or synthetic data, strategic annotation powers better, safer AI systems. Next, we'll tackle how to keep up high standards through data quality and governance across every phase of your project.

Providing data quality for AI projects and governance in AI development

As AI projects move from pilots to production, maintaining trustworthy, well-governed data becomes a strategic advantage — protecting both system performance and organizational reputation.

Set up multi-team oversight structures.

Centralize data governance AI into a group with leaders from data management, compliance, and product. This prevents siloed decisions and keeps standards consistent across all AI development.

Schedule regular, independent audits.

Commit to external or cross-team reviews targeting dataset drift, documentation gaps, and policy violations. Routine audits promote trust and continuous enhancement of data quality for AI.

Build in risk registers for machine learning failures.

Treat any critical failure as an incident with clear ownership for root-cause analysis — whether caused by faulty sources, process issues, or scaling challenges. This bolsters transparency and accountability.

Use adaptive data pipelines for future growth.

Design pipelines to flex as your business or rules change, so big data processing can easily adopt new checks, integrate fresh sources, or meet updated governance standards.

Design for explainability and compliance from day one.

Provide traceability and justified decisions throughout. Robust AI models should always be supported by records that pass audits and satisfy evolving regulations.

By moving from short-term fixes to systematic controls, your AI initiatives become future-proof and resilient. Next, we'll explore how these foundations set the stage for a comprehensive, business-aligned AI strategy.

Designing an AI strategy centered on high-quality training data

A winning AI strategy doesn't start with algorithms — it starts with a commitment to the best possible data. Every part of AI development, from planning to deployment, relies on the right approach to gathering and managing machine learning training data.

Build your strategy around quality

Successful leaders treat high-quality training data as a core business asset, not an afterthought. Focus on capturing diverse, clean, and relevant machine learning training data for every important use case. Invest early in processes that validate accuracy and represent a full range of real-world situations.

Align teams and roles

Data scientists, ML engineers, and data engineers all play distinct parts in the data journey. Data scientists pinpoint what information is needed and why. Data engineers architect data transformation pipelines to deliver it reliably and at scale. ML engineers bring expertise on annotation formats and model performance improvement, ensuring outputs link directly to business objectives.

Integrate the right AI project management and tools

Choose data labeling tools that match the complexity of your domain and workflow needs. The best solutions make it easy to monitor quality, automate simple decisions, and feed clean records back into the pipeline. Good AI project management builds review cycles, deadlines, and feedback into every phase — resulting in steady, predictable growth.

Make data transformation and quality central

Solid data transformation processes — cleansing, reformatting, augmenting — are non-negotiable for high quality. Schedule checks for data quality for AI after each change, knowing these steps are key for both compliance and user trust.

Keep strategy dynamic and measurable

An effective AI strategy never stands still. Use performance data and user feedback to adjust everything from data gathering to annotation and model monitoring. Track how each change in machine learning training data impacts model performance improvement, so you can prove the business value of every update.

AI development becomes scalable and sustainable when strategy, teams, tools, and quality checks move in sync from day one.

Conclusion

High-quality training data powers every breakthrough in artificial intelligence. When teams apply data annotation best practices and insist on clear, consistent inputs, they lay the groundwork for robust AI models that outperform expectations.

From healthcare to retail, leaders in data science and machine learning invest in quality at each stage. They know today's efforts shape tomorrow's AI applications — turning ideas into valuable, real-world solutions.

AI development is about how you collect, prepare, and manage the data that fuels your big data processing and teaches algorithms. With careful stewardship, each dataset becomes a strategic asset — and your organization is ready to capture the full promise of artificial intelligence.

By making data quality a focus, every team can deliver AI applications that are accurate, ethical, and ready for whatever comes next.

Ready to unlock the value of data science for your business? Contact the Ronas IT team to discover how we can support your AI development goals. Fill out a short form below.

Related posts

Related Services

React Native App Development Services

Save time and costs with Ronas IT's React Native app development, allowing cross-platform capabilities for iOS and Android. Our team has built over 30 apps across various sectors, ensuring rapid development, flexible maintenance, and cost-effective solutions.

MVP Development Services

Need to launch your startup quickly? Ronas IT offers urgent MVP development services, allowing you to get a fully-functional app in just 1-3 months. Ideal for testing business ideas, presenting to investors, or entering the market swiftly. Benefit from our extensive experience and accelerated development process.

Custom Mobile App Development

Transform your business with Ronas IT's custom mobile app development. We create tailored UI/UX designs, write clear and efficient code, and ensure seamless releases to Google Play and the App Store. Our experienced team delivers high-performance, secure apps within 3-4 months.